Explore the importance of ethics in data science, responsible AI implementation, and safeguarding data privacy. Learn how to uphold ethical standards.

Data ethics represents a foundational principle in the field of data science, emphasizing the moral obligations associated with the collection, analysis, and application of data. As technology continues to advance, the integration of artificial intelligence (AI) into various sectors has amplified the importance of ethical frameworks that govern the responsible use of data. Responsible AI encapsulates not only technical proficiency but also the ethical considerations that accompany data-driven decision-making processes.

The significance of data ethics extends beyond compliance with legal regulations; it is about establishing trust and integrity in how data is handled. In environments where vast amounts of personal information are processed, individuals and organizations must navigate complex ethical dilemmas that can arise from data misuse or misinterpretation. A breach of ethical standards can lead to significant consequences, including reputational damage and loss of consumer trust. Thus, establishing robust data ethics is paramount to ensuring accountability in AI systems.

Data ethics should be embedded in every stage of data handling, from data collection to algorithm development and deployment. This involves considering diverse perspectives and implications, such as fairness, transparency, and privacy. By addressing these ethical dimensions, data scientists and practitioners can create AI systems that reflect societal values and protect the rights of individuals. As the discipline of data science continues to evolve, the commitment to ethical practices must remain a priority, guiding innovations in a manner that aligns with public good and moral responsibility.

The spread of AI across sectors has made responsible usage a practical necessity rather than an aspiration. As these technologies advance, the ethical implications of their application come to the forefront, and three core principles recur: fairness, accountability, and transparency. These principles help ensure that data scientists and developers not only produce effective AI solutions but also align their work with the ethical standards that society demands.

Fairness in AI revolves around ensuring that algorithms operate without bias towards any particular group. For instance, the controversial use of facial recognition technology has drawn significant criticism for disproportionately misidentifying individuals from marginalized communities. Instances like these underscore the necessity for data scientists to evaluate the datasets they use to train algorithms, ensuring that they are representative and do not encode existing societal biases. Implementing fairness checks can help mitigate the risk of discriminatory outcomes.
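One simple form of the fairness check mentioned above is to compare how often a model makes a positive decision for each group. The sketch below is illustrative only: the group labels, predictions, and function names are hypothetical, and the two measures shown (a demographic parity gap and a selection-rate ratio) are just two of several fairness definitions a team might choose.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(rates):
    """Largest difference in selection rate between any two groups."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical toy data: one group label and one binary decision per person.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)
gap = demographic_parity_gap(rates)
ratio = disparate_impact_ratio(rates)
print(rates, gap, ratio)
```

A large gap or a low ratio does not prove discrimination on its own, but it is a signal that the training data and the model deserve closer review. What counts as an acceptable gap is a judgment that depends on the context.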

Accountability involves recognizing and owning the impacts of AI decisions. In several high-profile cases, companies have faced backlash for the consequences of deploying AI systems without proper oversight. For example, the use of AI-driven hiring tools that inadvertently favored certain demographics over others raised significant ethical concerns, leading to calls for greater transparency and accountability. By implementing rigorous testing and monitoring processes, data practitioners can uphold their responsibility to stakeholders affected by AI outputs.

Transparency is vital for fostering trust in AI systems. Users should be able to understand how decisions are made, particularly when they affect people’s lives. Organizations should strive to make their AI models interpretable, providing clear documentation and open lines of communication regarding the technology’s functionalities. By adhering to these ethical principles, data scientists can contribute to a responsible AI landscape that prioritizes the welfare of individuals and society as a whole.

Data science has emerged as a powerful tool for extracting insights and driving innovation across various sectors. However, at the core of this discipline lies the pivotal issue of privacy. Privacy in data science relates to the management and protection of personal data that can identify individuals, directly or indirectly. Personal data encompasses a wide array of information, including names, addresses, email IDs, and even behavioral data gathered from online activities. If this data is mishandled, it can lead to significant violations of privacy, resulting in potential harm to individuals and organizations alike.

As data scientists harness vast amounts of information for analysis, it is crucial to understand the ethical considerations surrounding its use. The misuse or unauthorized access to personal information not only breaches trust but can also infringe on legal and regulatory frameworks designed to protect citizen privacy. This is particularly vital in an age where consumers are increasingly aware and protective of their data privacy rights, fueled by high-profile data breaches and growing concerns over surveillance and misuse of personal information.

The challenge lies in striking an appropriate balance between leveraging data for innovation and safeguarding individuals’ privacy. While data can lead to breakthroughs in fields such as healthcare, finance, and technology, the ethical imperative to respect personal privacy must not be overlooked. Ignoring privacy concerns can jeopardize public confidence in organizations and institutions that rely on data. Hence, it is essential for data scientists to adopt responsible practices, such as anonymization and data minimization, to ensure that while personal data is used for beneficial purposes, individuals’ rights are upheld and protected. This conscious approach fosters a sustainable environment for ethical data science that promotes trust and accountability.

In the realm of data science, ethical decision-making frameworks are essential tools that help practitioners navigate complex moral dilemmas. These frameworks encourage data scientists to systematically evaluate the potential consequences of their actions on various stakeholders, including individuals, communities, and organizations. The adoption of such a framework can lead to responsible AI development and usage, promoting fairness, accountability, and transparency.

One prominent framework is the Utilitarian approach, which emphasizes the greatest good for the greatest number. This perspective urges data scientists to evaluate the outcomes of their decisions, weighing the benefits against the potential harms. By striving to maximize overall well-being, data scientists can prioritize stakeholders’ welfare over sheer profit or convenience. However, one must be cautious, as this approach might overlook the rights of minorities or vulnerable populations, emphasizing the need for a balanced ethical outlook.

Another valuable framework is the Rights-based approach, which focuses on protecting individual rights and ensuring that data practices align with established ethical standards. This method compels data scientists to consider the implications of their work on privacy and consent, reinforcing the importance of safeguarding personal information. By prioritizing human rights, this framework aids in addressing ethical dilemmas that involve data collection and usage practices.

A third framework worth mentioning is the Virtue ethics perspective, which encourages practitioners to reflect on the moral character and integrity of their decisions. This approach posits that personal values must guide ethical conduct in data science, highlighting virtues such as honesty, responsibility, and respect. By advocating for virtuous behavior, data scientists can cultivate a culture of ethical awareness, ensuring that their work contributes positively to society.

Data scientists are encouraged to adopt these frameworks as guiding principles when confronting ethical dilemmas. By employing a structured decision-making approach, they can holistically evaluate their choices and prioritize ethical considerations, thereby upholding the integrity of their work while respecting stakeholders’ rights.

As organizations increasingly rely on data-driven insights, ensuring robust data security measures becomes imperative to protect sensitive information from misuse. Data security involves a combination of technical and procedural safeguards designed to prevent unauthorized access, breaches, and data leaks. Data scientists play a vital role in implementing these measures, as they are often responsible for handling and processing large volumes of data.

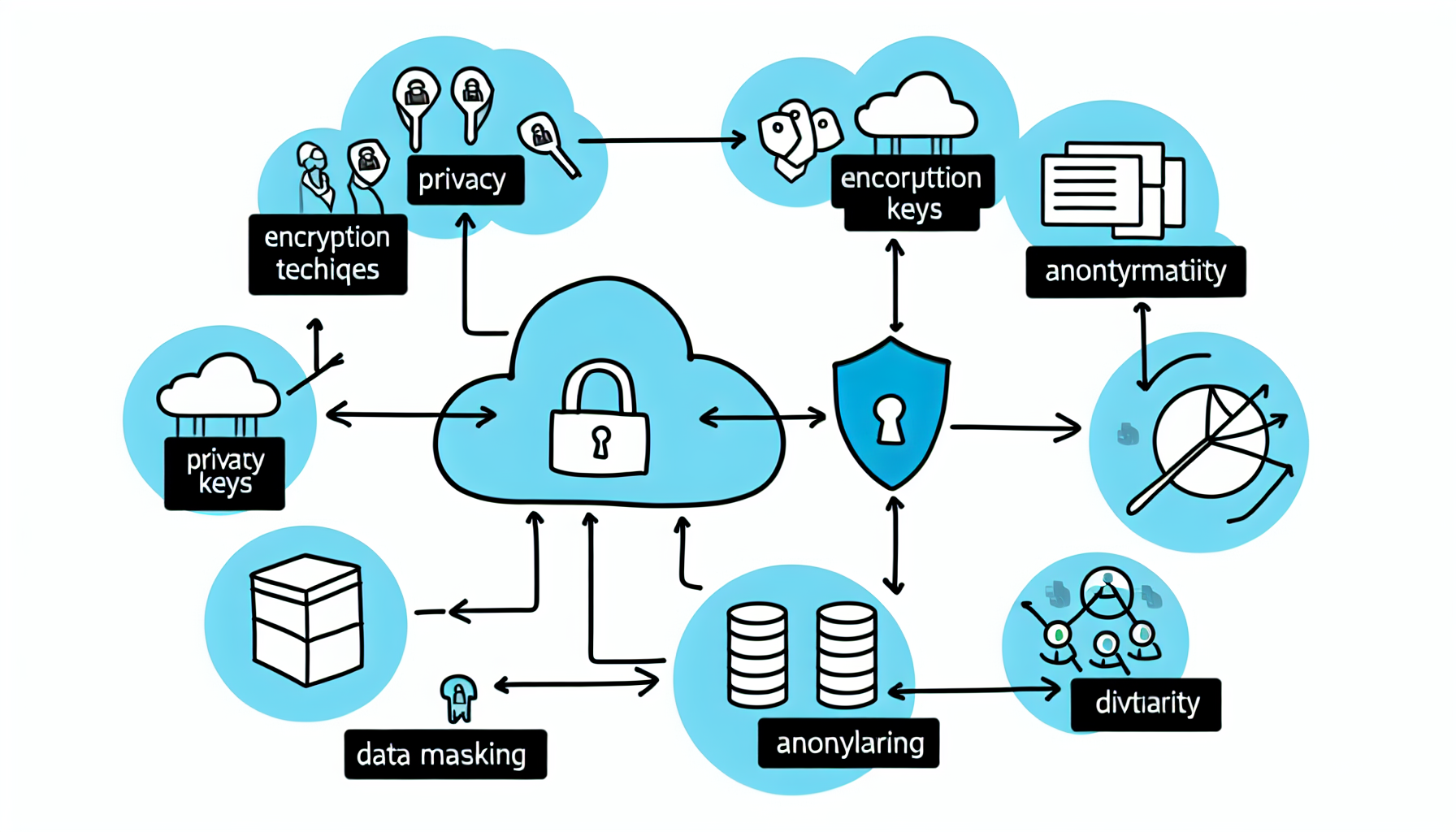

One of the key best practices for enhancing data security is encryption. By converting data into a coded format, encryption prevents anyone without the correct decryption key from reading the information, provided the keys themselves are managed securely. This practice is especially crucial for sensitive data, such as personally identifiable information and financial records. Moreover, end-to-end encryption protects data in transit against interception.

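Another essential measure is data anonymization, which involves removing or modifying identifying information within datasets so that individuals cannot reasonably be identified. Related techniques such as masking, pseudonymization, and aggregation let data scientists conduct analyses while limiting exposure of personal data, thereby reducing the risk of privacy violations. These techniques differ in strength: pseudonymized data can still be linked back to a person by anyone holding the key or mapping, and the GDPR continues to treat it as personal data, whereas properly anonymized data falls outside the regulation. When applied carefully, these techniques preserve much of a dataset’s analytical value while safeguarding individual privacy, making them a crucial practice in responsible data science.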
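The three techniques named above can be sketched with the Python standard library. This is a minimal illustration under stated assumptions, not a complete de-identification pipeline: the key, the field names, and the helper functions are hypothetical, and a keyed hash is only one possible way to pseudonymize an identifier.

```python
import hashlib
import hmac
from collections import Counter

# Hypothetical secret key; in practice it would be stored apart from the dataset.
SECRET_KEY = b"replace-with-a-secret-key"


def pseudonymize(identifier, key=SECRET_KEY):
    """Replace an identifier with a keyed hash: same input, same token."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_email(email):
    """Keep the first character of the local part and the domain, hide the rest."""
    local, _, domain = email.partition("@")
    return local[:1] + "***@" + domain


def age_band(age, width=10):
    """Generalize an exact age into a band such as '30-39'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


# Hypothetical records for illustration.
records = [
    {"email": "alice@example.com", "age": 34},
    {"email": "bob@example.com", "age": 37},
    {"email": "carol@example.com", "age": 52},
]

safe_records = [
    {
        "user_token": pseudonymize(r["email"]),
        "email_masked": mask_email(r["email"]),
        "age_band": age_band(r["age"]),
    }
    for r in records
]

# Aggregation: report counts per band instead of row-level data.
band_counts = Counter(r["age_band"] for r in safe_records)
print(dict(band_counts))
```

The token lets analysts join records belonging to the same person without seeing the email address. Anyone who holds the key can recompute tokens from known addresses, which is why the key needs to be protected separately from the data.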

In addition to encryption and anonymization, secure data storage solutions are paramount for ensuring data security. Utilizing cloud storage providers that implement strong security protocols, access control policies, and regular security audits can help mitigate risks. Organizations should also develop comprehensive data governance frameworks that outline data handling procedures, employee training, and incident response plans. Implementing these strategies can significantly strengthen data security and foster trust among stakeholders, aligning with ethical principles in data science.

The landscape of artificial intelligence (AI) regulations is evolving rapidly as governments and organizations seek to manage the ethical implications of data science practices. Among the most significant legal frameworks are the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). These regulations set stringent guidelines to protect individual privacy and ensure transparency in how personal data is handled, which has significant implications for data science projects.

The GDPR, which became applicable in May 2018, covers organizations that process the personal data of individuals in the European Union, including organizations based outside the EU that offer goods or services to those individuals or monitor their behavior. It requires a lawful basis for processing personal data; consent is one such basis, alongside others such as contractual necessity and legitimate interests, and where consent is relied on it must be freely given, specific, informed, and unambiguous. The regulation also gives individuals rights to access, rectify, and erase their data. Furthermore, the GDPR requires organizations to implement appropriate security measures to protect personal data, thus promoting ethical standards in data handling.

The CCPA, signed into law in 2018 and in effect since January 2020, focuses on data privacy rights for California residents. It grants consumers increased control over their personal information, allowing them to know what data is being collected, request its deletion, and opt out of its sale. For data scientists, compliance with the CCPA necessitates a thorough understanding of the data lifecycle and proactive measures to ensure lawful data usage.

Both regulations highlight the importance of ethical considerations in data science. Non-compliance can lead to severe penalties, including substantial fines and reputational damage. As organizations continue to leverage AI technologies, adherence to these regulations is paramount. By prioritizing compliance, data scientists not only uphold ethical standards but also contribute to building public trust in AI systems, thereby fostering a responsible AI ecosystem that respects individual rights and promotes transparency in data practices.

In the rapidly evolving domain of data science, the role of transparent algorithms is paramount in ensuring ethical decision-making. Transparency in algorithms refers to the extent to which the inner workings and decision-making processes of these algorithms can be understood and scrutinized by stakeholders, including data scientists, policymakers, and the general public. When algorithms operate transparently, users gain insights into the factors influencing outcomes, which significantly enhances accountability and trust.

Transparent algorithms allow for a more informed analysis of how data is processed and decisions are made. This understanding is crucial not only to validate the fairness and accuracy of the predictions produced but also to identify any potential biases embedded within the algorithms. For instance, in the context of predictive policing, if law enforcement agencies employ opaque algorithms, they risk perpetuating existing societal biases, which can lead to discriminatory practices against certain communities. The lack of transparency in such scenarios can erode public trust and lead to unethical outcomes.

Moreover, organizations that utilize transparent algorithms are better equipped to comply with data regulations, such as the General Data Protection Regulation (GDPR). By making their algorithmic decision-making processes clear and accessible, organizations can provide individuals with explanations regarding how their data is being utilized, thus enhancing user autonomy and encouraging responsible AI practices.
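To make the idea of an explainable decision concrete, the sketch below uses a deliberately simple linear scoring model and reports how much each input contributed to the outcome. The feature names, weights, and threshold are all hypothetical and chosen only for illustration; real systems often need dedicated interpretability tooling, which this example does not attempt to reproduce.

```python
# Hypothetical weights for an illustrative linear scoring model.
WEIGHTS = {"income_thousands": 0.5, "years_employed": 2.0, "missed_payments": -10.0}
BIAS = 10.0
THRESHOLD = 50.0


def score(applicant):
    """Linear score: bias plus the weighted sum of the applicant's features."""
    return BIAS + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)


def explain(applicant):
    """Return the decision with each feature's contribution, largest effect first."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    total = BIAS + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return {
        "score": total,
        "approved": total >= THRESHOLD,
        "contributions": ranked,
    }


# Hypothetical applicant.
applicant = {"income_thousands": 60, "years_employed": 4, "missed_payments": 1}
report = explain(applicant)

for name, value in report["contributions"]:
    print(f"{name}: {value:+.1f}")
print("score:", report["score"], "approved:", report["approved"])
```

Because every contribution is a weight multiplied by an input, the person affected can be told which factors raised or lowered the score. That kind of explanation is much harder to give for an opaque model.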

In summary, the significance of transparent algorithms in data science cannot be overstated. They facilitate ethical decision-making by promoting accountability and providing essential insights into the decision-making processes of algorithms. As organizations increasingly rely on data-driven solutions, prioritizing transparency in algorithm design becomes indispensable to navigate the ethical landscape of data science effectively.

As the field of data science continues to evolve, the importance of identifying ethical implications in data projects has become increasingly paramount. Data-driven decision-making can lead to significant advancements, but it also raises critical ethical concerns that need thorough examination. Analysts must engage in a proactive approach to recognize potential ethical risks that may arise during various stages of their projects.

One significant ethical implication involves data privacy. Analysts often handle sensitive information, and the breach of this data can have severe consequences for individuals, including identity theft or loss of privacy. It is essential for data professionals to assess which data sources are being utilized and to ensure that they comply with relevant data protection regulations. For example, understanding the General Data Protection Regulation (GDPR) requirements can help organizations navigate the complexities of data handling and privacy rights effectively.

Another ethical concern pertains to algorithmic bias. Data-driven models can inadvertently perpetuate or amplify existing biases in societal structures. Analysts must critically evaluate their data sets for any historical bias that may influence outcomes. By employing techniques such as fairness-aware machine learning, analysts can mitigate these biases and check that their models perform comparably across diverse populations.

Furthermore, transparency plays a crucial role in fostering trust in AI systems. Stakeholders should be informed about how decisions are made within data projects and the data that have been utilized. This not only enhances accountability but also allows for the identification of ethical risks throughout the project’s lifecycle. Developing clear, transparent documentation is vital for addressing potential concerns and building confidence among users regarding data-driven solutions.

In light of these factors, it is imperative for analysts and organizations to cultivate a responsible approach to data projects by systematically identifying, assessing, and addressing ethical implications. This framework not only enhances the integrity of data science endeavors but also contributes positively to society as a whole.

In the realm of data science, ethical data handling is paramount to ensure the responsible use of AI and the safeguarding of data privacy. Implementing best practices in this area can greatly enhance the integrity of data workflows and foster trust among stakeholders. One of the foundational principles in ethical data handling is the gathering of informed consent. Organizations should ensure that individuals whose data is being collected are fully aware of the purpose, scope, and potential consequences of data usage. Clear communication regarding consent processes enhances transparency and empowers users to make informed decisions regarding their personal information.
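One way to carry informed consent into a data workflow is to record the purposes each person agreed to and to check that record before any processing step. The sketch below assumes a hypothetical `ConsentRecord` structure and purpose names; it illustrates the idea and is not a description of any particular consent-management system.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ConsentRecord:
    """Hypothetical record of what one person agreed to and when."""
    subject_id: str
    purposes: set = field(default_factory=set)
    granted_on: Optional[date] = None
    withdrawn: bool = False


def may_process(record, purpose):
    """Allow processing only for an agreed purpose that has not been withdrawn."""
    return not record.withdrawn and purpose in record.purposes


consent = ConsentRecord(
    subject_id="user-123",
    purposes={"order_fulfilment", "product_analytics"},
    granted_on=date(2024, 1, 15),
)

can_analyze = may_process(consent, "product_analytics")
can_advertise = may_process(consent, "targeted_advertising")

# Withdrawal takes effect for every purpose from that point on.
consent.withdrawn = True
after_withdrawal = may_process(consent, "product_analytics")
print(can_analyze, can_advertise, after_withdrawal)
```

Tying each check to a named purpose keeps data collected for one reason from being quietly reused for another.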

Another crucial best practice is data minimization. Data scientists should collect only the data that is necessary for specific projects, thereby reducing the risk of inadvertently compromising sensitive information. By limiting data collection, organizations can also streamline their data management processes, minimizing potential security vulnerabilities and improving compliance with data protection regulations such as the GDPR. This approach not only aligns with ethical practices but also contributes to the responsible use of AI technologies.
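In code, data minimization can be as simple as an allow-list applied when records are ingested. The field names and the allow-list below are hypothetical; the point of the sketch is that unneeded fields are dropped before storage rather than filtered out later.

```python
# Hypothetical allow-list: the only fields this illustrative project needs.
ALLOWED_FIELDS = {"age_band", "region", "purchase_total"}


def minimize(record, allowed=ALLOWED_FIELDS):
    """Keep only allow-listed fields; everything else is dropped at ingestion."""
    return {key: value for key, value in record.items() if key in allowed}


def dropped_fields(record, allowed=ALLOWED_FIELDS):
    """List the fields that were discarded, for example for an audit log."""
    return sorted(set(record) - allowed)


# Hypothetical incoming record.
raw = {
    "name": "Alice Example",
    "email": "alice@example.com",
    "age_band": "30-39",
    "region": "EU-West",
    "purchase_total": 129.5,
}

minimal = minimize(raw)
removed = dropped_fields(raw)
print(minimal, removed)
```

An allow-list fails safe: a new field added upstream is excluded by default until someone decides the project actually needs it.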

Ongoing ethical training for data science teams is essential to ensure that all members are aware of the latest ethical standards and developments in data privacy laws. Regular workshops and training sessions can provide teams with the tools they need to navigate complex ethical dilemmas. Moreover, fostering a culture of ethical awareness within data science teams encourages open discussions about potential ethical issues and promotes accountability at all levels. Establishing an ethics review board can also provide an additional layer of oversight, ensuring that all data-driven projects adhere to ethical guidelines throughout their lifecycle.

By implementing these best practices—gathering informed consent, exercising data minimization, and engaging in continuous ethical training—organizations can significantly enhance their ethical data handling efforts, aligning their projects with the principles of responsible AI and data privacy.

The ethical landscape of data science has been shaped by numerous case studies highlighting both triumphs and failures. Successful implementations of ethical data science practices showcase how companies can derive value while respecting privacy and promoting fairness. For instance, Microsoft, in its commitment to responsible AI, established governance frameworks that guide its machine learning models and algorithms. The company emphasizes transparency and ethical considerations, such as bias mitigation and user consent, leading to enhanced trust in its products and services. This approach not only aligns with ethical obligations but also enhances user engagement and brand loyalty.

Conversely, failure to prioritize ethical practices can lead to significant reputational damage and legal repercussions. A notable example is Facebook’s Cambridge Analytica scandal, in which data from millions of users was harvested without their consent and used for political advertising. This incident not only undermined users’ trust but also prompted regulatory scrutiny that resulted in hefty fines, including a $5 billion penalty from the U.S. Federal Trade Commission in 2019. It underscores the importance of ethical data practices and the potential fallout of neglecting privacy and consent considerations.

Another case is that of Netflix, which uses data to enhance user experiences while seeking to protect privacy. By employing anonymization techniques and aggregating user data, Netflix offers personalized recommendations while safeguarding individual identities. This responsible use of data has allowed the company to maintain a competitive edge while fostering an ethical framework for data interaction.

These case studies exemplify the potential outcomes of ethical versus unethical data practices. As organizations navigate the complexities of data science, it is imperative to integrate ethical considerations into their strategies, ensuring responsible AI and data privacy. The lessons learned from these successes and failures will serve as guiding principles for the data science community moving forward.

In the realm of data science, a multitude of stakeholders plays an integral role in ensuring ethical practices and responsible utilization of data. Each stakeholder’s responsibilities vary, yet they collectively contribute to the overarching goal of maintaining data integrity and privacy.

Data scientists are at the forefront of this ethical landscape. Their role extends beyond mere data analysis; they are responsible for implementing rigorous ethical standards in their work. This includes ensuring transparency in algorithms, understanding the data sources, and avoiding biases that may skew results. By being mindful of the potential consequences their solutions may have on society, data scientists help to foster trust in data-driven decision-making.

Business leaders, too, play a crucial part in promoting ethical data science practices within organizations. They are tasked with setting a cultural tone that prioritizes ethical considerations alongside profitability. This involves instituting policies that encourage the responsible use of data, investing in training for employees, and supporting initiatives that protect consumer rights and data privacy. By aligning business strategies with ethical frameworks, leaders can ensure that their organizations remain accountable to the public.

Regulators and policymakers are essential stakeholders in the ethical data science landscape. Their responsibility lies in creating and enforcing legislation that governs data usage, privacy rights, and the ethical implications of artificial intelligence. By establishing a clear legal framework, regulators help to safeguard the public interest and hold organizations accountable for ethical breaches.

Lastly, the broader community, including individuals and advocacy groups, plays an influential role in voicing concerns regarding data ethics. Public awareness and advocacy can drive change and compel organizations to adopt more ethical practices. Engaging the public in conversations about data usage fosters a culture of accountability that ultimately benefits society.

The future of data ethics and responsible AI is poised to experience significant evolution as technological advancements continue to shape the landscape. Emerging trends indicate a growing emphasis on ethical frameworks tailored to data-driven decision-making processes. Organizations are increasingly recognizing the importance of not solely deriving insights but doing so in a manner that respects individuals’ privacy and upholds societal values. The concept of data ethics may expand beyond compliance with existing regulations to embrace the broader implications of AI deployment, such as bias mitigation, transparency, and accountability.

One notable trend is the integration of ethical considerations into machine learning practices. As AI systems become more complex and pervasive, ensuring that these systems operate fairly and transparently has become critical. Tools designed to audit algorithms for bias, coupled with diverse datasets that reflect the demographics of the affected population, will play a crucial role in shaping responsible AI development. This proactive approach can help prevent the reinforcement of existing social inequalities and foster trust between technology providers and users.
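Whether a dataset reflects the affected population can be checked by comparing group shares in the data with reference shares. The sketch below uses hypothetical group labels, reference figures, and a hypothetical 5-point tolerance; in practice the reference shares would come from a trusted source such as census data.

```python
from collections import Counter


def representation_gaps(sample_groups, reference_shares):
    """Dataset share minus reference share per group; negative means under-represented."""
    if not sample_groups:
        raise ValueError("sample_groups must not be empty")
    counts = Counter(sample_groups)
    total = len(sample_groups)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in reference_shares.items()
    }


def under_represented(gaps, tolerance=0.05):
    """Groups whose dataset share falls short of the reference by more than `tolerance`."""
    return sorted(group for group, gap in gaps.items() if gap < -tolerance)


# Hypothetical figures: group labels in a training set and population shares.
sample = ["a"] * 60 + ["b"] * 28 + ["c"] * 12
reference = {"a": 0.5, "b": 0.3, "c": 0.2}

gaps = representation_gaps(sample, reference)
flagged = under_represented(gaps)
print(gaps, flagged)
```

A flagged group is a prompt to collect more data or to reweight. It is not a guarantee that the model will be unfair, and balanced representation is not a guarantee that it will be fair.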

Additionally, public awareness of data privacy issues has grown considerably. Individuals are becoming more discerning about how their data is collected and used, influencing organizations to adopt a more ethical stance in their data practices. This shift in consumer sentiment is propelling businesses toward the creation of robust governance systems that prioritize data privacy and ethical considerations. Technological innovations are expected to support these efforts, such as advanced encryption techniques and decentralized data storage solutions that empower users to control their information.

In conclusion, the trajectory of data ethics and responsible AI will be determined by collaborative efforts across industries, regulatory bodies, and the public. By proactively addressing new challenges and adopting emerging technologies, stakeholders can contribute to a future where ethical data practices are embedded in the very fabric of data science.

As the use of data science continues to escalate across various sectors, establishing rigorous methodologies for testing and measuring the effectiveness of ethical data practices becomes crucial. This involves a systematic approach to evaluating how well data-driven solutions align with ethical principles, particularly regarding privacy, bias, and transparency. By doing so, organizations can ensure that their applications of artificial intelligence (AI) do not inadvertently harm individuals or perpetuate inequalities.

One effective framework for measuring the ethical implications of data use involves qualitative and quantitative metrics. Quantitative metrics could include evaluating the accuracy and fairness of algorithms by assessing outcomes across diverse demographic groups. For example, organizations can utilize bias detection tools that analyze model predictions to identify potential disparities in performance among different populations. Additionally, tracking the frequency and context of data breaches or instances of misuse can provide insights into the robustness of data privacy measures implemented.
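The quantitative side of such an assessment can be sketched by computing the same performance metrics separately for each demographic group. The labels, predictions, and function names below are hypothetical. The example reports accuracy and true positive rate per group, which are two of many metrics an audit might track.

```python
from collections import defaultdict


def per_group_metrics(groups, y_true, y_pred):
    """Accuracy and true positive rate (TPR) for each group."""
    stats = defaultdict(lambda: {"n": 0, "correct": 0, "pos": 0, "true_pos": 0})
    for group, truth, pred in zip(groups, y_true, y_pred):
        s = stats[group]
        s["n"] += 1
        s["correct"] += int(truth == pred)
        if truth == 1:
            s["pos"] += 1
            s["true_pos"] += int(pred == 1)
    return {
        group: {
            "accuracy": s["correct"] / s["n"],
            # TPR is undefined when a group has no positive examples.
            "tpr": s["true_pos"] / s["pos"] if s["pos"] else None,
        }
        for group, s in stats.items()
    }


def metric_gap(metrics, name):
    """Difference between the best and worst group on one metric."""
    values = [m[name] for m in metrics.values() if m[name] is not None]
    return max(values) - min(values)


# Hypothetical labels and predictions for two groups.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 1, 1, 0, 0, 0]

metrics = per_group_metrics(groups, y_true, y_pred)
accuracy_gap = metric_gap(metrics, "accuracy")
tpr_gap = metric_gap(metrics, "tpr")
print(metrics, accuracy_gap, tpr_gap)
```

In this toy data both groups have the same accuracy, yet the model finds every true positive in one group and only half in the other. Overall accuracy can hide a disparity of this kind, which is why audits usually look at more than one metric.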

Qualitative assessments, on the other hand, involve gathering feedback from stakeholders, including users and communities affected by the data use. Conducting interviews and surveys can reveal insights into individuals’ perceptions of how their data is being handled, enabling organizations to address any lingering ethical concerns. Furthermore, engaging in transparent dialogue with stakeholders promotes accountability and fosters trust.

Iterative improvement is an essential aspect of ethical data practices. Organizations should continuously refine their methodologies based on the outcomes of their assessments. This might include revisiting data governance policies, enhancing training for data scientists on ethical considerations, or adjusting algorithm parameters to reduce bias. Ultimately, employing systematic testing and metrics to evaluate ethical data practices leads to more responsible AI implementations and bolsters data privacy, creating a more equitable landscape for all users.
