In data analysis and machine learning, dimensionality reduction is a widely used technique for simplifying complex datasets. It reduces the number of features (variables) under consideration, which makes the data easier to visualize and can improve model performance. By compressing a dataset into fewer dimensions, practitioners can filter out noise, cut computation time, and in many cases improve the accuracy of machine learning models.

Dimensionality reduction becomes more important as datasets grow wider, and modern data collection routinely produces many variables per observation. A large number of variables can trigger the “curse of dimensionality,” a term for the problems that arise when analyzing and organizing data in high-dimensional spaces. As the number of dimensions increases, the volume of the space grows so quickly that the available data becomes sparse and distances between points become less informative, which makes patterns hard to identify. Models trained on such data are prone to overfitting: they fit the training set well but generalize poorly to unseen data.
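One symptom of the curse of dimensionality can be checked numerically. The sketch below assumes points drawn uniformly from a unit hypercube (a hypothetical setup, not from the original text) and measures how much farther the farthest neighbor of a query point is than its nearest neighbor. As the dimension grows, that relative contrast shrinks toward zero, so “near” and “far” become hard to tell apart.

```python
import numpy as np

def relative_distance_contrast(n_points: int, n_dims: int, seed: int = 0) -> float:
    """Return (max - min) / min of the distances from one query point to all others.

    Points are sampled uniformly from the unit hypercube [0, 1]^n_dims.
    """
    rng = np.random.default_rng(seed)
    points = rng.uniform(size=(n_points, n_dims))
    query, others = points[0], points[1:]
    distances = np.linalg.norm(others - query, axis=1)
    return float((distances.max() - distances.min()) / distances.min())

for d in (2, 10, 100, 1000):
    print(f"{d:>5} dims: contrast = {relative_distance_contrast(500, d):.3f}")
```

In low dimensions the nearest neighbor is many times closer than the farthest one; in a thousand dimensions all points sit at roughly the same distance from the query.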

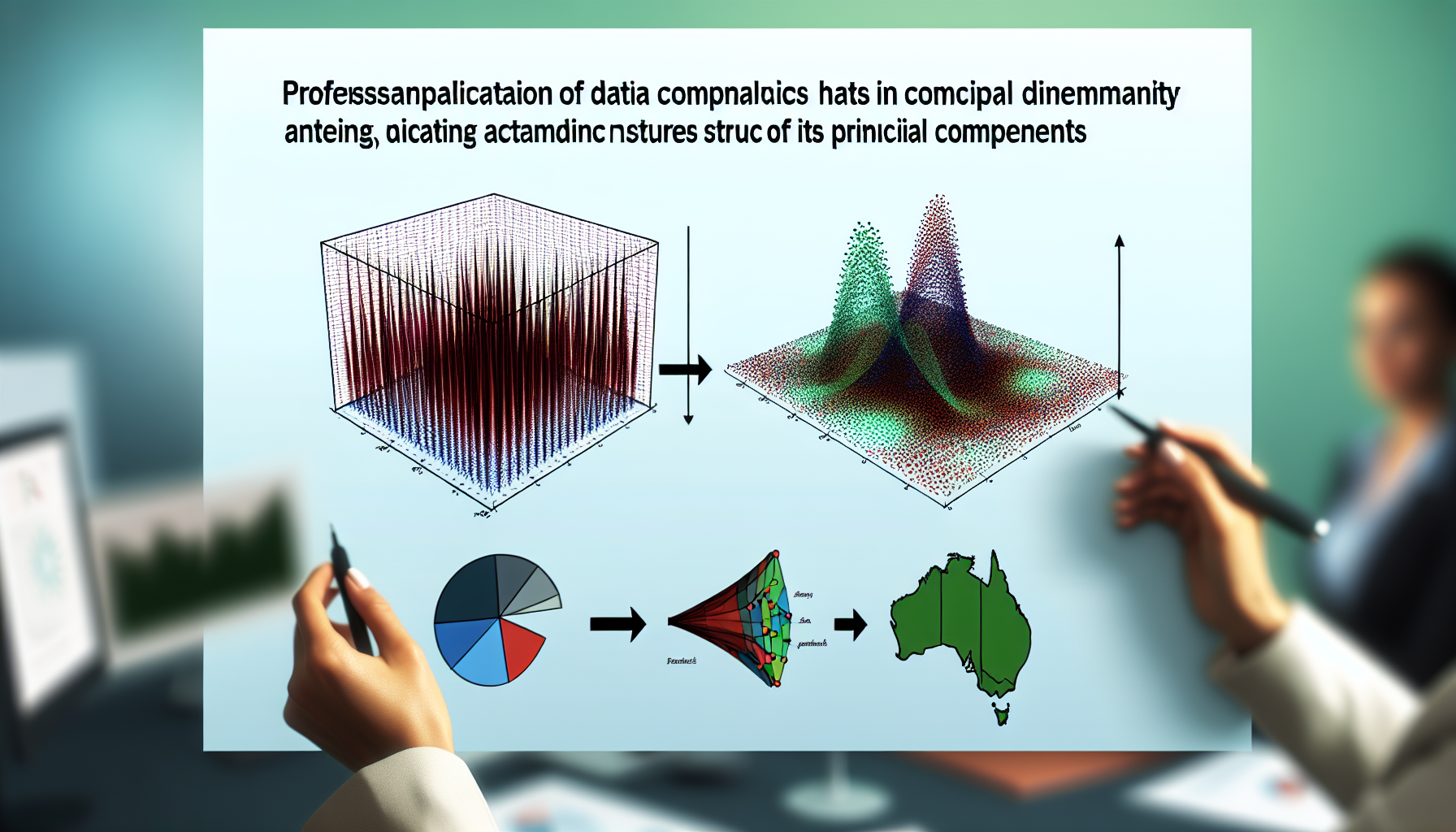

Dimensionality reduction techniques such as Principal Component Analysis (PCA), factor analysis, and t-distributed Stochastic Neighbor Embedding (t-SNE) are essential tools for mitigating these challenges. They map high-dimensional data into a lower-dimensional space while retaining key relationships and structure in the data: PCA preserves as much variance as possible, whereas t-SNE preserves local neighborhoods. By concentrating on the directions or structure that carry the most information, analysts can uncover patterns that might otherwise remain hidden. This facilitates both data visualization and more efficient machine learning workflows.

In summary, dimensionality reduction is a core part of data analysis. These techniques help analysts and data scientists make their datasets more interpretable, which leads to better insights and more reliable model performance. Understanding them is fundamental for anyone who wants to get the most out of their data in machine learning applications.

Principal Component Analysis (PCA) is a widely used statistical technique for dimensionality reduction. At its core, PCA converts a set of possibly correlated variables into a new set of linearly uncorrelated variables called principal components, ordered so that the first captures the most variance. Keeping only the first few components simplifies the dataset while retaining as much of the original variance as possible.

The mathematical foundation of PCA is linear algebra: the eigenvalues and eigenvectors of the dataset’s covariance matrix. Each eigenvector gives the direction of one principal component, and the corresponding eigenvalue equals the variance of the data along that direction. The leading components capture the most variance, which is what allows the data to be represented effectively in fewer dimensions.
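For reference, these relationships can be written compactly. The notation is an assumption introduced here: $X$ is a data matrix with $n$ rows (samples) and $p$ centered columns (features), $\Sigma$ is its sample covariance matrix, and each eigenvector $v_j$ has unit length.

```latex
\begin{aligned}
\Sigma &= \tfrac{1}{n-1} X^\top X, \qquad \Sigma v_j = \lambda_j v_j, \qquad \lambda_1 \ge \lambda_2 \ge \dots \ge \lambda_p \ge 0,\\
\operatorname{Var}(X v_j) &= \tfrac{1}{n-1}\,(X v_j)^\top (X v_j) = v_j^\top \Sigma v_j = \lambda_j \, v_j^\top v_j = \lambda_j,\\
\text{share of variance in the first } k &= \frac{\sum_{j=1}^{k} \lambda_j}{\sum_{j=1}^{p} \lambda_j}.
\end{aligned}
```

The second line is why the eigenvalue measures the variance captured by its component: projecting the data onto $v_j$ yields values whose sample variance is exactly $\lambda_j$.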

PCA can also reduce noise. When the signal is concentrated in a few high-variance directions, discarding the low-variance components removes much of the noise spread across the remaining directions, which can help downstream machine learning algorithms. The same transformation aids data visualization and mitigates the curse of dimensionality, in which many traditional algorithms struggle because of an overwhelming number of features.
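The noise-reduction effect can be sketched with synthetic data. This example assumes scikit-learn’s `PCA` and a hypothetical signal that lies in a 2-D subspace of a 20-D space, with Gaussian noise added to every feature. Keeping two components and mapping back to the original space removes most of the noise, because most of it lies in the 18 discarded directions.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)
n_samples, n_features, true_rank = 500, 20, 2

# Hypothetical clean signal: 2 latent factors mixed into 20 observed features.
latent = rng.normal(size=(n_samples, true_rank))
mixing = rng.normal(size=(true_rank, n_features))
clean = latent @ mixing

# Observed data = signal + isotropic Gaussian noise.
noisy = clean + rng.normal(scale=0.5, size=clean.shape)

# Keep only the leading components, then map back to the 20 original features.
pca = PCA(n_components=true_rank)
denoised = pca.inverse_transform(pca.fit_transform(noisy))

mse_noisy = np.mean((noisy - clean) ** 2)
mse_denoised = np.mean((denoised - clean) ** 2)
print(f"MSE vs. clean signal, before: {mse_noisy:.3f}, after PCA: {mse_denoised:.3f}")
```

On real data the true rank is unknown, so the number of retained components becomes a tuning choice.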

When applying PCA, the first step is usually to standardize the dataset. Standardization puts features on a comparable scale, so that no feature dominates the analysis simply because it is measured in larger units. Practitioners then compute the covariance matrix and derive its eigenvalues and eigenvectors. The eigenvectors are sorted by their eigenvalues in descending order, so that the components retaining the most variance come first.

PCA’s relevance extends to various fields, including finance, genomics, and image processing, making it a staple technique in the toolbox of data scientists and analysts. Its unsupervised nature enables the discovery of underlying patterns without requiring prior labeling of the data, thereby offering a robust foundational method for exploratory data analysis and subsequent analytical tasks.

Understanding the step-by-step mechanics of PCA is crucial for implementing it correctly. The first step is to standardize the dataset so that features measured on different scales contribute comparably to the analysis. This is done by subtracting the mean of each feature and dividing by its standard deviation, which gives every feature a mean of zero and a variance of one.
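A minimal sketch of the standardization step in NumPy, using hypothetical height and income columns to stand in for features on very different scales. The result is cross-checked against scikit-learn’s `StandardScaler`, which applies the same z-score transform (with the population standard deviation).

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical data: two features on very different scales.
X = np.column_stack([
    rng.normal(loc=170, scale=10, size=200),        # e.g. height in cm
    rng.normal(loc=70_000, scale=15_000, size=200),  # e.g. annual income
])

# z-score: subtract each column's mean, then divide by its standard deviation.
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

print(X_std.mean(axis=0))  # each column's mean is now (numerically) zero
print(X_std.std(axis=0))   # each column's standard deviation is now one
print(np.allclose(X_std, StandardScaler().fit_transform(X)))
```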

Next, compute the covariance matrix of the standardized data. Each entry captures how a pair of features varies together: a positive covariance means the two features tend to increase together, and a negative one means that one tends to decrease as the other increases. For standardized data, the covariance matrix is essentially the correlation matrix. The directions along which the data varies the most, which are the directions worth keeping when the rest are discarded, are encoded in this matrix and extracted in the next step.

With the covariance matrix in hand, the focus shifts to eigendecomposition: calculating the eigenvalues and eigenvectors of the covariance matrix. Each eigenvalue is a scalar giving the amount of variance captured along its eigenvector, and the eigenvectors give the directions of that variance. Sorting the eigenvalues in descending order identifies the eigenvectors associated with the largest eigenvalues. The top few of these eigenvectors form the basis of the new, lower-dimensional space.
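The covariance and eigendecomposition steps can be sketched in NumPy as follows. The correlated 3-feature dataset is hypothetical. `np.linalg.eigh` is used because a covariance matrix is symmetric; it returns eigenvalues in ascending order, so they are re-sorted in descending order.

```python
import numpy as np

rng = np.random.default_rng(1)
# Hypothetical correlated data: 300 samples, 3 features.
X = rng.multivariate_normal(
    mean=[0.0, 0.0, 0.0],
    cov=[[3.0, 1.5, 0.5], [1.5, 2.0, 0.3], [0.5, 0.3, 1.0]],
    size=300,
)
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

# Covariance matrix of the standardized data (features are columns).
cov = np.cov(X_std, rowvar=False)

# eigh handles symmetric matrices; eigenvalues come back in ascending order.
eigenvalues, eigenvectors = np.linalg.eigh(cov)

# Sort descending so column 0 of `eigenvectors` is the first principal component.
order = np.argsort(eigenvalues)[::-1]
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]

print(eigenvalues)                      # variance along each component
print(eigenvalues / eigenvalues.sum())  # share of total variance per component
```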

The final step is to project the standardized data onto the space spanned by the selected eigenvectors, that is, to multiply the data matrix by the matrix whose columns are those eigenvectors. The result is a reduced representation that retains most of the original variance. The process as a whole reduces the dimensionality of the data while minimizing information loss, which is what makes PCA useful for simplifying complex datasets and uncovering underlying patterns.
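Putting the four steps together gives a from-scratch sketch. The function name `pca_project` and the random test data are hypothetical; the result is cross-checked against scikit-learn’s `PCA` applied to `StandardScaler` output. Because an eigenvector and its negation describe the same direction, the comparison uses absolute values.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

def pca_project(X: np.ndarray, k: int) -> np.ndarray:
    """Standardize X, then project it onto its top-k principal components."""
    X_std = (X - X.mean(axis=0)) / X.std(axis=0)             # step 1: standardize
    cov = np.cov(X_std, rowvar=False)                         # step 2: covariance
    eigenvalues, eigenvectors = np.linalg.eigh(cov)           # step 3: eigendecomposition
    top_k = eigenvectors[:, np.argsort(eigenvalues)[::-1][:k]]  # p x k basis, largest first
    return X_std @ top_k                                      # step 4: n x k projection

rng = np.random.default_rng(2)
X = rng.normal(size=(200, 5)) @ rng.normal(size=(5, 5))  # hypothetical correlated data
Z = pca_project(X, k=2)

# Cross-check with scikit-learn; signs of components are arbitrary, so compare |values|.
Z_ref = PCA(n_components=2).fit_transform(StandardScaler().fit_transform(X))
print(Z.shape, np.allclose(np.abs(Z), np.abs(Z_ref)))
```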

Principal Component Analysis (PCA) has become a key technique in data visualization, particularly for transforming high-dimensional datasets into more interpretable two- or three-dimensional representations. As datasets grow in complexity and dimensionality, visualizing relationships among features becomes increasingly difficult. PCA addresses this by reducing dimensions while preserving as much variance as possible, which makes it easier to draw insights from large volumes of data.

A common application of PCA is image processing. With facial images, for instance, PCA distills pixel data into a small number of principal components (in face recognition these are often called “eigenfaces”) that capture the main ways in which the faces vary. This reduction cuts storage and computation time and makes the relationships among facial attributes easier to inspect. PCA is also common in clustering applications, where projecting data onto the leading components can reveal inherent groupings and make differences between classes visible.

Another area where PCA is widely used for data visualization is genomics. Researchers often work with high-dimensional data such as gene expression profiles. By applying PCA, they can represent complex genetic datasets in a more manageable form and identify patterns and correlations among genes. This can support personalized medicine, where understanding the genetic basis of diseases can lead to targeted therapies.

Moreover, PCA’s ability to project data points into lower dimensions allows for better visualization in various business applications. For example, marketers can utilize PCA to analyze consumer behavior, offering insights into customer preferences and segmentation. By visualizing customer data in reduced dimensions, businesses can better tailor their marketing strategies.

In conclusion, PCA is not only a fundamental technique for dimensionality reduction but also a powerful tool for improving data visualization. Its applications across various fields highlight its versatility and importance in making complex data more accessible and understandable.

Exploratory Data Analysis (EDA) serves as a crucial step in the process of data analysis, allowing analysts to gain insights and understand the underlying structure of their datasets. Principal Component Analysis (PCA) plays a significant role in this preliminary phase by transforming high-dimensional data into lower-dimensional representations, thus facilitating a clearer interpretation of patterns and relationships within the data. Through the reduction of dimensions, PCA enables individuals to visualize the inherent structure while retaining the most essential characteristics of the original dataset.

PCA achieves this by identifying the directions, or principal components, in which the data varies the most. These components become new axes for the dataset, allowing for a more interpretable view. For instance, plotting data points along the first two or three principal components provides a simplified yet powerful way to visualize complex structures, such as clusters or trends. This visualization aids analysts in identifying outliers, correlations, and patterns that may not be readily apparent in the original high-dimensional space.
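As a sketch of this kind of 2-D view, the example below assumes scikit-learn and its bundled handwritten-digits dataset (8×8 images, i.e. 64 features). It projects every image onto the first two principal components and prints each digit class’s centroid in that plane. The resulting coordinates can be passed to any scatter-plot function (for example matplotlib’s `plt.scatter(Z[:, 0], Z[:, 1], c=y)`) for visual inspection of clusters and outliers.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

digits = load_digits()  # 1,797 images of 8x8 pixels = 64 features
X = StandardScaler().fit_transform(digits.data)
y = digits.target

pca = PCA(n_components=2)
Z = pca.fit_transform(X)  # one (PC1, PC2) point per image

print(Z.shape)
print(pca.explained_variance_ratio_)  # share of variance shown on each axis

# Class centroids in the 2-D view; well-separated centroids hint at cluster structure.
for digit in range(10):
    print(digit, Z[y == digit].mean(axis=0).round(2))
```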

PCA is not limited to visualization; it also shows which features contribute to the variance in the data. By analyzing the loadings of the original variables on the principal components, data scientists can determine which features carry the most weight in representing the dataset. This information can inform feature selection and engineering and, in turn, improve model performance.
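A sketch of reading loadings with scikit-learn, assuming the bundled Iris dataset. `components_` holds one row of feature weights per component; scaling those weights by the square root of each component’s variance is one common convention for “loadings” (conventions differ between textbooks and tools). With standardized inputs, these loadings approximate the correlation between each feature and each component.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

iris = load_iris()
X = StandardScaler().fit_transform(iris.data)

pca = PCA(n_components=2).fit(X)

# components_: shape (n_components, n_features). Transpose to (n_features, n_components)
# and scale each column by the standard deviation of that component.
loadings = pca.components_.T * np.sqrt(pca.explained_variance_)

for name, (pc1, pc2) in zip(iris.feature_names, loadings):
    print(f"{name:<20} PC1={pc1:+.2f}  PC2={pc2:+.2f}")
```

Features with large absolute loadings on a component are the ones that component mostly represents.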

Incorporating PCA into the EDA process helps analysts derive meaningful insights from their data more effectively. It highlights relationships among features and helps uncover hidden patterns that matter for later stages of analysis. Applying PCA during early exploration is therefore often a worthwhile step toward a comprehensive understanding of complex datasets.

Principal Component Analysis (PCA) is also useful for reducing the feature space of high-dimensional datasets, where a large number of features can lead to overfitting and a heavy computational burden. Strictly speaking, PCA performs feature extraction rather than feature selection: it transforms the original feature set into a new set of uncorrelated variables, the principal components, each of which combines all the original features, and the first few capture most of the variability in the data. It finds these components by identifying the directions along which the variance of the data is maximized.

The key to using PCA effectively for this purpose is deciding how many principal components to retain. A common rule of thumb is to keep enough components to account for a substantial share of the total variance, often 70 to 90 percent. Another common approach is a scree plot, which shows the eigenvalue (or explained variance) of each principal component in order. The “elbow” of the curve marks the point beyond which additional components yield diminishing returns in explained variance.
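A sketch of the cumulative-variance rule, assuming scikit-learn and its bundled digits dataset, with a 90 percent target chosen for illustration. The per-component ratios are exactly what a scree plot would display. scikit-learn can also apply the threshold directly when `n_components` is given as a float between 0 and 1.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

X = StandardScaler().fit_transform(load_digits().data)

# Fit with all components and read off the explained-variance curve.
pca_full = PCA().fit(X)
ratios = pca_full.explained_variance_ratio_  # values for a scree plot
cumulative = np.cumsum(ratios)

threshold = 0.90  # assumed target within the commonly cited range
k = int(np.searchsorted(cumulative, threshold)) + 1  # smallest k reaching the target
print(f"{k} components explain {cumulative[k - 1]:.1%} of the variance")

# Equivalent shortcut: a float n_components is treated as a variance target.
pca_90 = PCA(n_components=threshold).fit(X)
print(pca_90.n_components_)
```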

When applying PCA, practitioners should interpret the loadings of each principal component, as they indicate the contribution of the original features to the new components. Features with high absolute loading values can be considered important, thus facilitating a better understanding of the underlying structure in the data. Additionally, it is crucial to standardize the features before applying PCA, particularly if they are measured on different scales. This ensures that the PCA results are not disproportionately influenced by features with larger scales.

In conclusion, reducing the feature space with PCA not only lowers dimensionality but can also improve model performance by focusing on the most informative components. By analyzing variance contributions and interpreting component loadings, researchers and data analysts can refine their datasets and build more accurate predictive models.

Principal Component Analysis (PCA) is a widely utilized technique for dimensionality reduction, yet it is not without its limitations and challenges. One prominent concern is PCA’s sensitivity to outliers. Since PCA aims to maximize the variance captured in the principal components, the presence of outliers can disproportionately influence the calculations. These data points can skew the principal component directions, leading to misleading results and an inaccurate representation of the underlying data structure. Researchers must carefully consider data quality and the potential impact of outliers when applying PCA.
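The effect of a single outlier can be demonstrated with hypothetical 2-D data that is stretched along the x-axis, so the first principal component should point along x. The helper `first_component_angle` is illustrative and uses scikit-learn’s `PCA`. Adding one extreme point on the y-axis is enough to swing the first component almost onto the y-axis.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)
# Hypothetical data: standard deviation 3 along x, 1 along y.
X = rng.normal(size=(100, 2)) * [3.0, 1.0]

def first_component_angle(data: np.ndarray) -> float:
    """Angle in degrees (0-90) between PC1 and the x-axis; PC1's sign is arbitrary."""
    pc1 = PCA(n_components=1).fit(data).components_[0]
    return float(np.degrees(np.arctan2(abs(pc1[1]), abs(pc1[0]))))

X_with_outlier = np.vstack([X, [[0.0, 60.0]]])  # one extreme point on the y-axis

print(f"clean:        {first_component_angle(X):5.1f} degrees")
print(f"with outlier: {first_component_angle(X_with_outlier):5.1f} degrees")
```

Robust alternatives or outlier screening before PCA are common responses to this sensitivity.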

Another critical limitation of PCA is its assumption of linearity. PCA seeks to find linear combinations of the original features that capture the most variance, which poses a challenge when working with non-linear datasets. In scenarios where the relationship between features is highly non-linear, PCA may fail to accurately represent the data’s inherent structure. As a result, alternative techniques that can handle non-linearity, such as t-SNE or kernel PCA, may be more appropriate in such instances.
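The linearity limitation shows up clearly on two concentric rings, where class membership depends on the radius. The sketch below uses scikit-learn’s `PCA` and `KernelPCA` with an RBF kernel; `gamma=5` is an assumption tuned for this toy data. To compare the two embeddings, a hypothetical helper measures how well a linear classifier separates the classes in each 2-D embedding. Linear PCA only rotates the rings, so they stay inseparable, whereas the kernel embedding makes them linearly separable.

```python
import numpy as np
from sklearn.datasets import make_circles
from sklearn.decomposition import PCA, KernelPCA
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

# Two concentric rings: the class depends on the radius, a non-linear feature.
X, y = make_circles(n_samples=400, factor=0.3, noise=0.05, random_state=0)

Z_linear = PCA(n_components=2).fit_transform(X)
# gamma is an assumed value for this data; in practice it needs tuning.
Z_kernel = KernelPCA(n_components=2, kernel="rbf", gamma=5).fit_transform(X)

def linear_separability(Z: np.ndarray, labels: np.ndarray) -> float:
    """Training accuracy of a logistic-regression classifier on a 2-D embedding."""
    Z_scaled = StandardScaler().fit_transform(Z)
    return LogisticRegression().fit(Z_scaled, labels).score(Z_scaled, labels)

acc_linear = linear_separability(Z_linear, y)
acc_kernel = linear_separability(Z_kernel, y)
print(f"linear PCA: {acc_linear:.2f}, kernel PCA: {acc_kernel:.2f}")
```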

Furthermore, PCA requires the original features to be scaled appropriately, particularly when they are measured in different units or have varying scales. Without proper normalization, the components derived from PCA could be misleading, as features with larger scales can dominate the variance capture process. This necessitates careful preprocessing, which can add complexity to the analysis. Additionally, PCA does not provide a straightforward interpretation of the principal components, as these components are linear combinations of the original variables. Thus, understanding what each component signifies in relation to the original features can become challenging.
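A sketch of the scaling problem, assuming scikit-learn and two independent hypothetical features whose spreads differ by a factor of 1,000. Without scaling, the first component is essentially just the large-scale feature and appears to explain nearly all the variance. After standardization, the two independent features share the variance roughly equally.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Two independent hypothetical features on very different scales.
X = np.column_stack([
    rng.normal(scale=1000.0, size=500),  # large-scale feature
    rng.normal(scale=1.0, size=500),     # small-scale feature
])

raw = PCA().fit(X)
scaled = PCA().fit(StandardScaler().fit_transform(X))

print("unscaled PC1 weights:   ", raw.components_[0].round(3))
print("unscaled variance ratio:", raw.explained_variance_ratio_.round(3))
print("scaled variance ratio:  ", scaled.explained_variance_ratio_.round(3))
```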

In conclusion, while PCA is a powerful dimensionality reduction tool, its limitations must be acknowledged. The technique’s sensitivity to outliers, linearity assumptions, and dependency on feature scaling can affect its effectiveness. Therefore, it is essential to evaluate the specific characteristics of the dataset at hand and consider alternative methods when necessary.

While Principal Component Analysis (PCA) is widely recognized for its efficacy in reducing dimensionality, it is not the only technique available for this purpose. Multiple alternatives exist, each with unique advantages and applicability depending on the specific characteristics of the dataset and the analysis objectives. In this regard, t-Distributed Stochastic Neighbor Embedding (t-SNE), Uniform Manifold Approximation and Projection (UMAP), and Linear Discriminant Analysis (LDA) are notable methodologies worth exploring.

t-SNE is particularly well suited to visualizing high-dimensional data. It preserves local structure, keeping points that are neighbors in the original space close together in the low-dimensional map, which makes it a popular choice for visually inspecting clusters during exploratory data analysis. Because it can represent complex, non-linear relationships that are common in high-dimensional datasets, it is useful for visualizing intricate patterns. However, t-SNE can be computationally intensive and is sensitive to parameter choices such as perplexity, and the distances between clusters and the apparent cluster sizes in a t-SNE map should not be over-interpreted.
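A minimal t-SNE sketch, assuming scikit-learn’s `TSNE` and a 500-image subset of its bundled digits dataset (subsetting only keeps the run short). Two perplexity values are computed because perplexity, roughly the effective number of neighbors each point considers, is the parameter that most often changes how the map looks. Plotting both embeddings side by side is a quick way to see that sensitivity.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.manifold import TSNE

digits = load_digits()
X, y = digits.data[:500], digits.target[:500]  # subset to keep the run short

# Same data, two perplexity settings; the resulting maps can differ visibly.
embeddings = {
    perplexity: TSNE(n_components=2, perplexity=perplexity, random_state=0).fit_transform(X)
    for perplexity in (5, 30)
}

for perplexity, Z in embeddings.items():
    print(perplexity, Z.shape)
```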

UMAP, on the other hand, aims to preserve both local and global structure in the data. It is grounded in ideas from Riemannian geometry and algebraic topology and is typically faster than t-SNE, especially on larger datasets. Its versatility makes it suitable for a range of applications, from image processing to genomics, and it has gained traction in recent years not only for visualization but also as a preprocessing step for clustering and classification.

Finally, Linear Discriminant Analysis (LDA) serves a distinct purpose. Unlike PCA, which is unsupervised, LDA is a supervised method: it uses class labels to find a feature subspace that maximizes class separability. It therefore applies when labels are available and is particularly effective in classification tasks. With C classes, LDA yields at most C - 1 components, so it can reduce dimensionality substantially while preserving the discriminative information.
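The supervised/unsupervised difference shows up directly in the API calls. This sketch assumes scikit-learn and the bundled Iris dataset (4 features, 3 classes): PCA is fit on the features alone, while LDA’s `fit_transform` also takes the labels. With 3 classes, LDA can produce at most 2 components.

```python
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis

X, y = load_iris(return_X_y=True)  # 4 features, 3 classes

Z_pca = PCA(n_components=2).fit_transform(X)  # unsupervised: never sees y

lda = LinearDiscriminantAnalysis(n_components=2)  # at most n_classes - 1 = 2
Z_lda = lda.fit_transform(X, y)                   # supervised: uses the labels

print(Z_pca.shape, Z_lda.shape)
print(lda.explained_variance_ratio_)  # share of between-class variance per axis
```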

Dimensionality reduction techniques are essential in data preprocessing as they simplify data analysis while preserving its essential characteristics. Among these techniques, Principal Component Analysis (PCA) is the most widely recognized. However, other methods such as t-Distributed Stochastic Neighbor Embedding (t-SNE), Uniform Manifold Approximation and Projection (UMAP), and Linear Discriminant Analysis (LDA) also present unique advantages depending on the context of their application.

PCA excels when the relationships among variables are largely linear. It identifies the directions (principal components) that maximize variance in high-dimensional space, which makes it particularly useful for feature extraction before applying machine learning algorithms. It is computationally efficient and therefore suitable for large datasets. However, PCA may struggle with complex data structures, such as non-linear relationships, because a linear projection can flatten or mix structure that matters.

t-SNE, by contrast, is designed specifically for visualizing high-dimensional data in two or three dimensions. It preserves local neighborhoods, which makes clusters stand out and suits exploratory data analysis. However, t-SNE can be computationally intensive and often requires careful parameter tuning to yield meaningful results. The standard algorithm also produces an embedding only for the points it was fit on, not a reusable mapping for new data, which limits its use in embedded systems or real-time applications.

UMAP is another powerful tool that balances the strengths of both PCA and t-SNE. It is computationally efficient while preserving both local and global structures in the data, making it suitable for tasks that demand both visualization and further machine learning processes. Unlike PCA, UMAP can handle non-linear relationships effectively, making it a more versatile choice when working with complex datasets.

Ultimately, the choice of a dimensionality reduction technique should depend on the characteristics of the dataset and the objectives of the analysis. Each technique has its own strengths and weaknesses, so understanding the nature of the data is crucial for choosing the appropriate method.

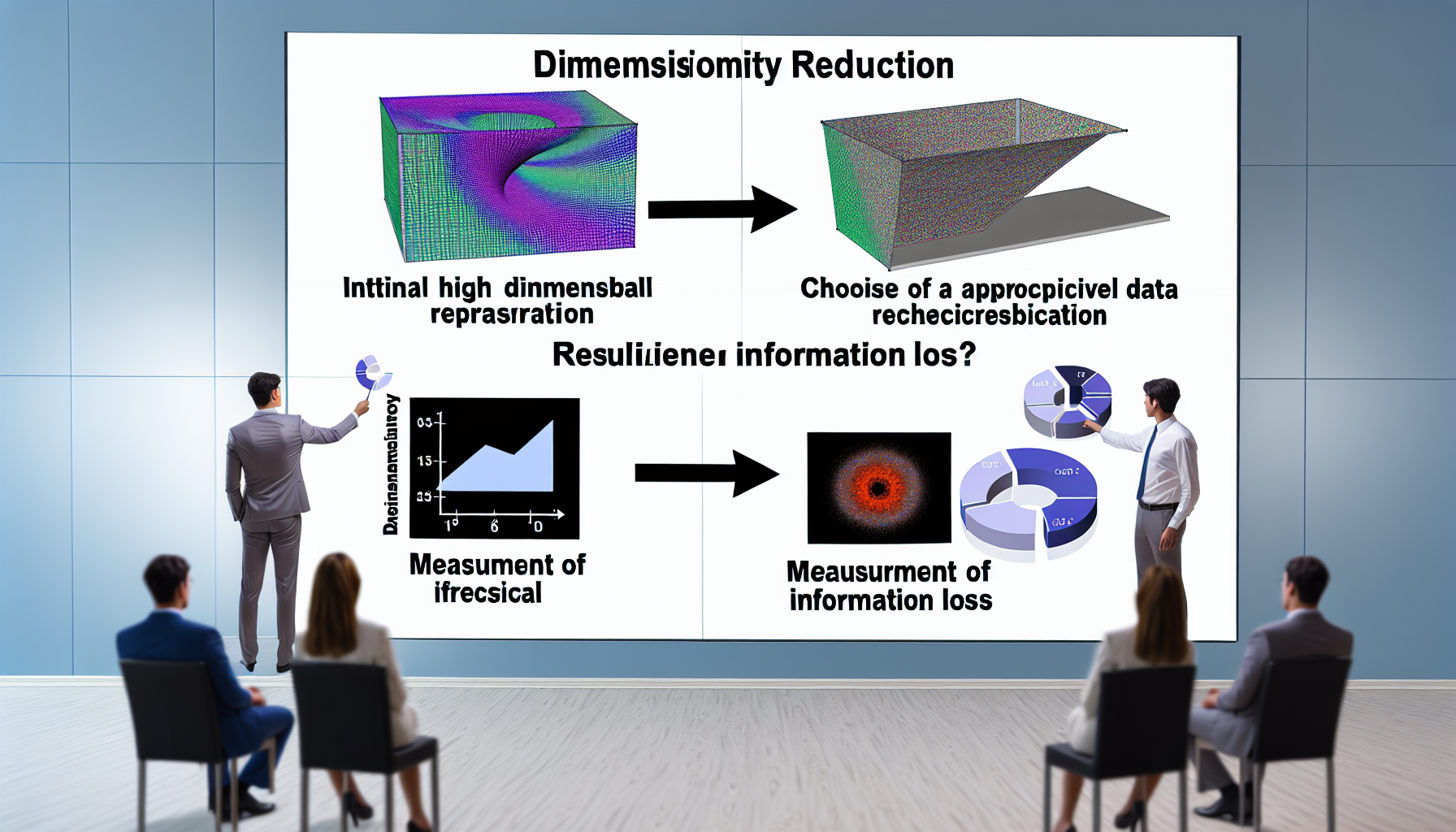

Assessing the effectiveness of dimensionality reduction is crucial to ensure that it serves its intended purpose without compromising data integrity. One prominent metric is reconstruction loss, which quantifies how accurately the original data can be reconstructed from the lower-dimensional representation. For PCA, the reconstruction loss is determined by the variance in the discarded components: the squared reconstruction error, suitably normalized, equals the sum of their eigenvalues. A lower loss therefore means that more of the original variance is captured in fewer dimensions. Tracking this metric helps practitioners choose how many dimensions to retain while keeping information loss small.
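A sketch of measuring reconstruction loss with scikit-learn on its bundled digits dataset. The helper `reconstruction_loss` is hypothetical: it compresses to `k` components, maps back with `inverse_transform`, and normalizes the squared error by n - 1 so that it is directly comparable to the variance of the discarded components.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA

X = load_digits().data  # 1,797 samples x 64 pixel features

def reconstruction_loss(X: np.ndarray, k: int) -> float:
    """Squared reconstruction error after keeping k components, divided by (n - 1)."""
    pca = PCA(n_components=k, svd_solver="full").fit(X)
    X_hat = pca.inverse_transform(pca.transform(X))
    return float(np.sum((X - X_hat) ** 2) / (X.shape[0] - 1))

full = PCA(svd_solver="full").fit(X)  # all 64 component variances, largest first

for k in (5, 10, 20, 40):
    discarded = full.explained_variance_[k:].sum()
    print(f"k={k:2d}  loss={reconstruction_loss(X, k):9.2f}  discarded variance={discarded:9.2f}")
```

The two columns agree, which is why the loss curve and the explained-variance curve lead to the same choice of k for PCA.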

Another important measure is explained variance, which indicates how much information is retained in the reduced dataset. The explained variance ratio of each component lies between 0 and 1, and a cumulative ratio closer to 1 means that more of the original data’s variability is preserved. When reducing dimensionality, examine the cumulative explained variance to determine how many components are needed for a satisfactory level of retention. This measure is most natural for linear methods such as PCA; non-linear methods such as t-SNE and UMAP do not provide an equivalent, so comparing across those families requires other criteria.

Additionally, visual inspection often plays a significant role in assessing dimensionality reduction effectiveness, especially in clustering and classification tasks. Scatter plots, for instance, can be utilized to visualize the relationships between data points after applying a dimensionality reduction technique. By examining clusters formed in these visualizations, analysts can assess how well the data has been separated and how clear the classifications appear. This qualitative analysis complements quantitative metrics by offering an intuitive understanding of the reduced data’s structure. Therefore, leveraging a combination of reconstruction loss, explained variance, and visual inspections provides a comprehensive approach to evaluating the effectiveness of dimensionality reduction methodologies.

Successfully applying dimensionality reduction techniques requires careful consideration of several factors. First, pre-processing data is crucial for achieving optimal results. Clean your dataset by handling missing values, removing duplicates, and ensuring consistent formatting. Standardization or normalization of features is often necessary, especially for methods like Principal Component Analysis (PCA), which are influenced by the scale of the data. Transforming features to a common scale can help enhance the effectiveness of the dimensionality reduction process.
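The pre-processing steps can be chained so that they always run in the same order. This sketch assumes scikit-learn’s `make_pipeline` with `SimpleImputer`, `StandardScaler`, and `PCA`, applied to hypothetical data with mixed scales and about 5 percent missing values. Median imputation is one reasonable choice among several.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.impute import SimpleImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical raw data: 6 features on mixed scales, ~5% of entries missing.
X = rng.normal(size=(100, 6)) * [1, 10, 100, 1, 10, 100]
X[rng.random(X.shape) < 0.05] = np.nan

# Fill gaps, put features on a common scale, then reduce to 3 components.
reducer = make_pipeline(
    SimpleImputer(strategy="median"),
    StandardScaler(),
    PCA(n_components=3),
)
Z = reducer.fit_transform(X)
print(Z.shape, np.isnan(Z).any())
```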

Next, retaining important features should be a priority. While the primary goal of dimensionality reduction is to simplify data without losing critical information, it is essential to identify key variables that significantly contribute to the underlying patterns within the dataset. Techniques such as feature importance ranking or correlation analysis can aid in determining which features to retain. Additionally, understanding the domain of the data can guide the selection of important features, as knowledge of the subject matter often reveals relationships not captured by automated methods.

When applying dimensionality reduction techniques, it is also imperative to validate results rigorously. Split your dataset into training and testing subsets to evaluate model performance effectively. Employ cross-validation for a more robust assessment of model generalization and resilience to overfitting. Analyze the results using both qualitative and quantitative methods. Visualizing data projections through scatter plots can provide immediate insights into how well the reduced dimensions represent the original data. Furthermore, consider comparing the performance of different dimensionality reduction techniques to ascertain which best fits your specific use case.
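One way to validate a reduction is to cross-validate the whole model with the reduction inside it. This sketch assumes scikit-learn and its digits dataset, and uses a hypothetical helper `cv_accuracy`. Because the scaler and PCA sit inside the pipeline, each fold fits them on its own training split only, so no information from the test fold leaks into the reduction. Comparing several component counts shows how much the reduction costs in accuracy.

```python
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_digits(return_X_y=True)

def cv_accuracy(n_components: int) -> float:
    """Mean 5-fold accuracy of scaler -> PCA -> logistic regression."""
    model = make_pipeline(
        StandardScaler(),
        PCA(n_components=n_components),
        LogisticRegression(max_iter=2000),
    )
    return cross_val_score(model, X, y, cv=5).mean()

for k in (5, 20, 40):
    print(f"{k:2d} components: mean CV accuracy = {cv_accuracy(k):.3f}")
```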

By implementing these practical tips, practitioners can navigate the complexities associated with dimensionality reduction and improve their data analysis processes significantly.

Dimensionality reduction techniques, particularly Principal Component Analysis (PCA), are used in fields including finance, healthcare, and image processing, where they simplify datasets for more efficient analysis and visualization. In finance, for instance, investment firms have applied PCA to market data consisting of numerous correlated financial indicators. Focusing on a limited number of principal components lets analysts identify the key factors affecting stock prices, which can improve predictive accuracy and support more informed investment decisions, illustrating how dimensionality reduction streamlines complex data.

In healthcare, dimensionality reduction has shown particular promise in genomic data analysis. Studies of microarray gene expression data, which measure thousands of genes per sample, have used PCA to reduce the data to a small set of principal components in which distinct patterns associated with various diseases can be identified. Such analyses can speed up research and provide insights that may lead to more targeted therapies.

Image processing also relies heavily on dimensionality reduction. Face recognition systems, for example, have long used PCA to extract compact image features, which lets them compare images quickly while maintaining accuracy and makes real-time recognition more practical. t-SNE, by contrast, is better suited to visualizing such features than to computing them in a live system, because it does not provide a mapping for new images. Across these examples, dimensionality reduction helps organizations manage large volumes of complex data, underscoring its relevance in diverse industries.

Dimensionality reduction plays a pivotal role in the realm of machine learning, addressing the challenges posed by high-dimensional datasets. By condensing the data into a lower-dimensional space, essential patterns and structures can be identified, thereby improving the performance of various algorithms. The integration of techniques such as Principal Component Analysis (PCA) has enabled practitioners to enhance data visualization, reduce computation times, and mitigate the risks of overfitting. As the technological landscape continues to evolve, the relevance of these techniques only grows.

Looking towards the future, several emerging trends in dimensionality reduction warrant attention. One notable advancement is the increase in the use of nonlinear methods such as t-Distributed Stochastic Neighbor Embedding (t-SNE) and UMAP (Uniform Manifold Approximation and Projection). These techniques allow for more nuanced representations of data, capturing intricate relationships that linear methods may overlook. Such methods are particularly valuable in fields like bioinformatics and image analysis where complex structures abound.

Deep learning is also being incorporated into dimensionality reduction. Autoencoders, for instance, learn to compress input data into a low-dimensional code and reconstruct it, preserving essential features in the process. Combining deep learning with traditional dimensionality reduction gives practitioners the ability to handle large volumes of data efficiently and to capture non-linear structure that linear methods miss.

As practitioners and researchers continue to explore these advanced techniques, the range of possibilities keeps expanding. Keeping up with developments in dimensionality reduction deepens one’s understanding and improves methodology across disciplines, and the field remains rich with opportunities for innovation and refinement.
